UPDATE 4/11/2022 This has been fixed!

UPDATE 4/3/2022 Still broken. Waiting on the product group for a fix.

UPDATE 3/4/2022 The Microsoft product group will be pushing a fix out to Azure Gov in two weeks. I asked what the cause was, but have heard nothing yet.

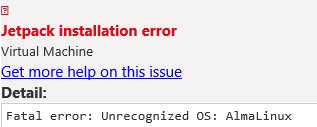

One of the great features of Azure Policy is the capability to audit OS settings for security baselines and compliance checking. I was deploying RHEL 8.4 and noticed the Guest Assignment was always stuck in the Pending state. I had no issues with Ubuntu, so it had to be something happening on the RHEL VM.

I navigated to /var/lib and saw that the GuestConfig folder had been created, but it was empty. Hrm, this should be populated with folders and MOF files.

    [root@rhel84 GuestConfig]# pwd
    /var/lib/GuestConfig
    [root@rhel84 GuestConfig]# ls -al
    total 4
    drwxr--r--. 2 root root 6 Feb 26 22:13 .
    drwxr-xr-x. 41 root root 4096 Feb 26 22:13 ..

The next step was to tail the messages log to see if anything could pinpoint what was actually happening.

    [root@rhel84 GuestConfig]# tail -f /var/log/messages | grep -i GuestConfiguration
    Feb 26 22:27:36 rhel84 systemd[7442]: gcd.service: Failed at step EXEC spawning /var/lib/waagent/Microsoft.GuestConfiguration.ConfigurationforLinux-1.25.5/GCAgent/GC/gc_linux_service: Permission denied
    Feb 26 22:27:46 rhel84 systemd[7458]: gcd.service: Failed at step EXEC spawning /var/lib/waagent/Microsoft.GuestConfiguration.ConfigurationforLinux-1.25.5/GCAgent/GC/gc_linux_service: Permission denied
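That path is long and easy to mistype, so it can help to pull it straight out of the log line. This is a minimal sketch that assumes the message format shown above; the function name `gc_failed_binary` is my own and not part of any Azure or systemd tooling.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read log lines on stdin and print the binary path from any
# "gcd.service: Failed at step EXEC spawning <path>: Permission denied" line.
gc_failed_binary() {
  sed -n 's/.*gcd\.service: Failed at step EXEC spawning \(.*\): Permission denied$/\1/p'
}

# Example (reads the most recent matching line from the messages log):
#   grep 'gcd.service: Failed at step EXEC' /var/log/messages | tail -n 1 | gc_failed_binary
```

Lines that do not match the pattern produce no output, so the helper can be fed the whole log.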

Alright, a permission denied error. It’s something to start looking into, but I was confused about why it was happening. I headed over to Azure commercial and spun up a RHEL 8.4 VM with the same Azure Policy to execute my security baseline. To my surprise, everything worked just fine. /var/lib/GuestConfig contained the Configuration folder with MOF files, and the Guest Assignment was showing NonCompliant, so I knew it was working there. I did notice the Guest Extension in commercial was at 1.26.24 while Gov was at 1.25.5. I tried deploying that version in Gov with auto upgrade disabled, but got the same error.

After some research, I set SELinux to permissive mode, and instantly the Configuration folder was created and started pulling the MOF files down. OK, now I was really puzzled. Azure support was able to reproduce the same issue in Gov, but not in commercial. I was shocked that no other cases had been opened. I am not sure when this problem started, but it means security baselines on RHEL 8.x+ are not working in Azure Gov.
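For anyone wanting to repeat the permissive-mode check, here is a rough sketch of the steps. The helper names `run` and `confirm_selinux_cause` are hypothetical, the 15-second wait is an assumption based on the roughly 10-second retry interval visible in the log timestamps, and the script only prints the commands unless `DRY_RUN=0` is set and it is run as root.

```shell
#!/usr/bin/env bash
# Prints each command by default; set DRY_RUN=0 and run as root to execute them.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical wrapper: echo the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

# Hypothetical helper: go permissive briefly, see whether GuestConfig fills in,
# list recent SELinux denials, then return to enforcing mode.
confirm_selinux_cause() {
  run setenforce 0                  # permissive: denials are logged, not enforced
  run sleep 15                      # assumption: gcd.service retries about every 10 seconds
  run ls -al /var/lib/GuestConfig   # should now show the Configuration folder
  run ausearch -m AVC -ts recent    # AVC records from the last 10 minutes
  run setenforce 1                  # restore enforcing mode
}
```

Calling `confirm_selinux_cause` with the default settings just lists the five commands, which makes it easy to review before running anything on a real VM.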

While waiting for Microsoft to investigate why this is happening, I looked for a workaround. Knowing SELinux was causing the issue, I thought I could just create a policy rule allowing gc_linux_service to execute.

I tested first by making sure SELinux was set to Enforcing, then used chcon to set the SELinux context:

    [root@rhel84 GuestConfig]# getenforce
    Enforcing
    chcon -t bin_t /var/lib/waagent/Microsoft.GuestConfiguration.ConfigurationforLinux-1.25.5/GCAgent/GC/gc_linux_service

We’re all good. No errors in the messages log. Since a chcon change can be reverted by a later restorecon run, I added it to the SELinux policy by running:

    semanage fcontext -a -t bin_t /var/lib/waagent/Microsoft.GuestConfiguration.ConfigurationforLinux-1.25.5/GCAgent/GC/gc_linux_service
    restorecon -v /var/lib/waagent/Microsoft.GuestConfiguration.ConfigurationforLinux-1.25.5/GCAgent/GC/gc_linux_service
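One caveat with the rule above is that the path contains the extension version, so it would stop matching if the extension is upgraded. Since `semanage fcontext` accepts a regular expression as the file spec, a version-independent rule might look like the sketch below. It assumes the directory name differs only in its version suffix, and the variable and function names (`GC_FCONTEXT_REGEX`, `label_gc_service`, `gc_path_matches`) are mine, purely for illustration. I have only tested the exact-path commands shown above.

```shell
#!/usr/bin/env bash
# Assumption: only the version suffix of the extension directory changes between releases.
GC_FCONTEXT_REGEX='/var/lib/waagent/Microsoft\.GuestConfiguration\.ConfigurationforLinux-[^/]+/GCAgent/GC/gc_linux_service'

# Hypothetical helper: add a bin_t rule covering every version, then relabel
# whichever versions are installed. Run as root; semanage ships in
# policycoreutils-python-utils on RHEL 8.
label_gc_service() {
  semanage fcontext -a -t bin_t "$GC_FCONTEXT_REGEX"
  restorecon -v /var/lib/waagent/Microsoft.GuestConfiguration.ConfigurationforLinux-*/GCAgent/GC/gc_linux_service
}

# Hypothetical helper: check that the pattern matches a full path, anchored at
# both ends the way file-context specs are.
gc_path_matches() {
  printf '%s\n' "$1" | grep -Eq "^${GC_FCONTEXT_REGEX}\$"
}
```

`gc_path_matches` is there only to sanity-check the pattern against a path before touching the policy; `label_gc_service` is what changes the system.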

I will update this post once Microsoft comes back with a reason why this is only happening in Azure Gov and with their proposed solution. For now, I’d not depend on the Guest Extension to perform your compliance checking for RHEL 8.x until a fix has been pushed.