Out of the box, an Azure Function sets up its connections using an access key to talk to its storage account. If you create a new queue trigger, it’ll just set up the connection to use that same shared access key. That is not ideal; we should be using some form of Azure AD (AAD) authentication instead. Let’s take a look at managed identities and how to actually make this work in Azure Government.

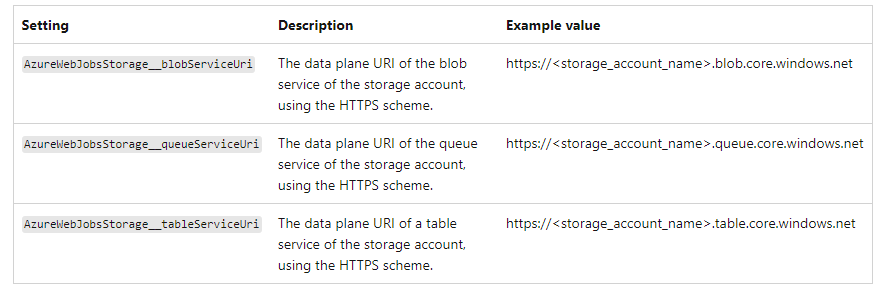

Straight out of Microsoft’s documentation:

> If you’re configuring AzureWebJobsStorage using a storage account that uses the default DNS suffix and service name for global Azure, following the `https://<accountName>.[blob|queue|file|table].core.windows.net` format, you can instead set AzureWebJobsStorage__accountName to the name of your storage account. The endpoints for each storage service will be inferred for this account. This won’t work if the storage account is in a sovereign cloud or has a custom DNS.

Well, Azure Government is excluded from this neat feature, so how do we use an identity-based connection? We need to add a new setting to the function configuration that tells it to use a managed identity when the trigger is a blob or queue. In my example, I want to use a storage account named sajimstorage with a queue called my-queue. Now, pay attention, because here are the undocumented tidbits for Azure Gov. The setting name needs to be in the format AzureWebJobs[storageAccountName]__queueServiceUri. AzureWebJobs is static and must always be there; next comes the name of your storage account, then 2 underscores followed by queueServiceUri. The value should be the endpoint of your queue with no trailing slash!
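For reference, here is roughly what that setting looks like in the portal's Advanced edit view of the application settings, using the sajimstorage example from this post. The `slotSetting` value is an assumption on my part; set it to whatever matches your app.

```json
[
  {
    "name": "AzureWebJobssajimstorage__queueServiceUri",
    "value": "https://sajimstorage.queue.core.usgovcloudapi.net",
    "slotSetting": false
  }
]
```

Note the double underscore in the name and the missing trailing slash in the value.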

Next, we must ensure the connection property in function.json is set to the name of your storage account. This ties back to the setting above: the function matches the connection name against the part of the setting name that sits between AzureWebJobs and __queueServiceUri.
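As a sketch, a function.json for the example in this post could look like the following. The binding name `QueueItem` is an assumption taken from the default PowerShell queue trigger template; yours may differ. The parts that matter here are `queueName` and `connection`.

```json
{
  "bindings": [
    {
      "name": "QueueItem",
      "type": "queueTrigger",
      "direction": "in",
      "queueName": "my-queue",
      "connection": "sajimstorage"
    }
  ]
}
```

With `connection` set to sajimstorage, the trigger picks up the AzureWebJobssajimstorage__queueServiceUri setting instead of the shared access key.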

There are more steps, but I figured it would be easier to actually give an example.

- Create a new Azure Function on the Consumption plan. I am using the PowerShell Core runtime for this example.

- Create the storage account you want to use the function’s managed identity with.

- In your function, enable the system assigned managed identity.

- In the storage account you want to use the managed identity with, assign the function’s managed identity the RBAC roles Storage Queue Data Reader and Storage Queue Data Message Processor.

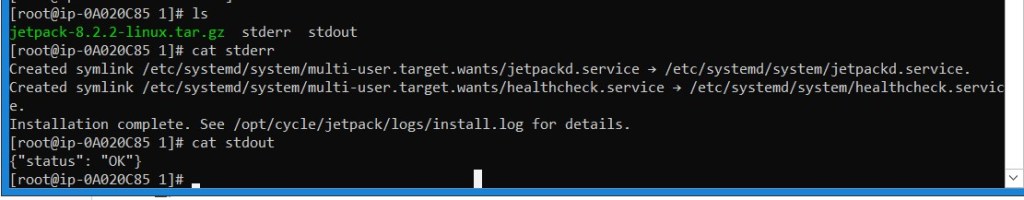

- Open up Kudu for your function and, in the debug console, navigate to site/wwwroot.

- Edit requirements.psd1 and add `'Az.Accounts' = '2.12.0'`

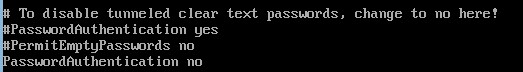

Don’t uncomment `'Az' = '10.*'` because it’ll bring in every module and take forever. Just use what you need. Also, do not use `2.*` for Az.Accounts because it breaks the managed identity. This is a longer discussion, but visit https://github.com/Azure/azure-powershell/issues/21647 to see all the ongoing issues. I was also explicitly told to use 2.12.0 by Microsoft.

- Edit profile.ps1 and add -Environment AzureUSGovernment to your Connect-AzAccount call. It should look like this: `Connect-AzAccount -Identity -Environment AzureUSGovernment`

- Back in the Azure Function Configuration, add a new application setting following the format AzureWebJobs[storageAccountName]__queueServiceUri, e.g. AzureWebJobssajimstorage__queueServiceUri (2 underscores), and set the value to the endpoint of your queue: https://sajimstorage.queue.core.usgovcloudapi.net

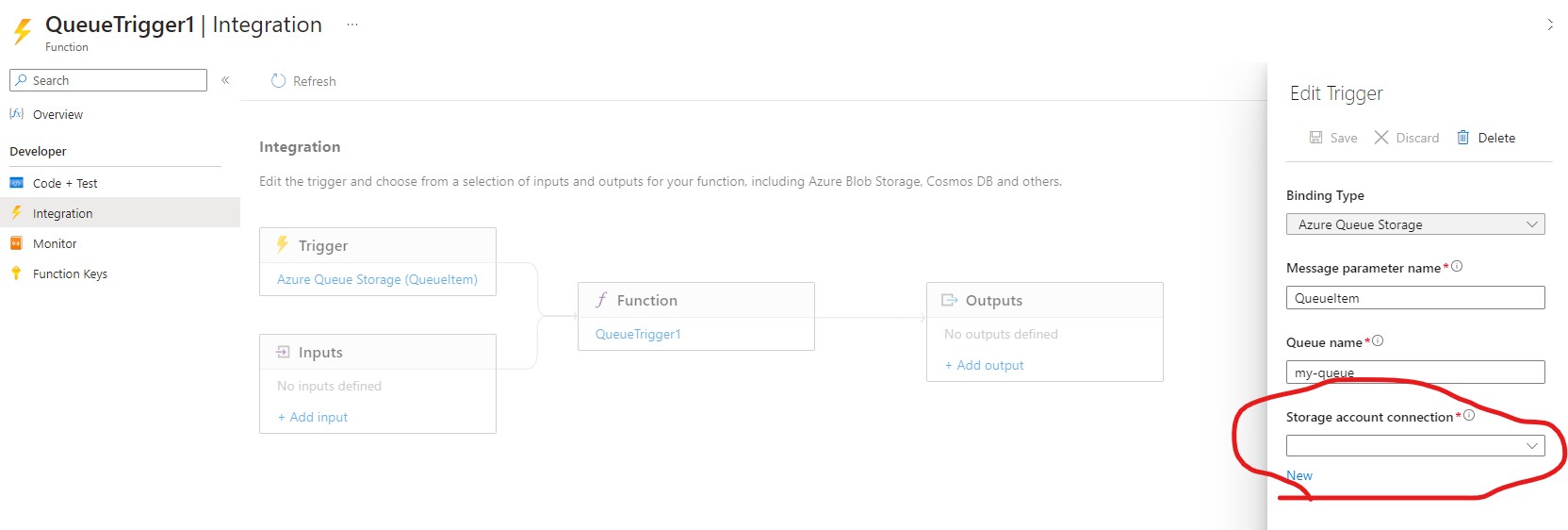

- Create your queue trigger function. It will default to using the shared access key, but let’s change that. Open Code + Test, open the dropdown that shows run.ps1, and select function.json. Change `"connection": "AzureWebJobsStorage"` to `"connection": "sajimstorage"`

Change the queue name if you want. Out of the box, it is ps-queue-items, but I am going to change it to my-queue. Save your changes.

- Restart your function from the Overview page.

- Add a test message to your queue and view the output in your function’s invocation traces.
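To pull the two file edits from the steps above together, here is a rough sketch of what requirements.psd1 and profile.ps1 might end up looking like. These are two separate files shown in one block for convenience. The `if ($env:MSI_SECRET)` wrapper and the `Disable-AzContextAutosave` line are assumptions based on the default PowerShell function template; if your profile.ps1 differs, only the `-Environment AzureUSGovernment` addition matters.

```powershell
# ----- requirements.psd1 -----
@{
    # Leave the full Az rollup commented out; it pulls in every module.
    # 'Az' = '10.*'

    # Pin the exact version; a 2.* wildcard breaks the managed identity login.
    'Az.Accounts' = '2.12.0'
}

# ----- profile.ps1 -----
# Assumption: this check comes from the default template and only runs
# the login when a managed identity is enabled on the function app.
if ($env:MSI_SECRET) {
    Disable-AzContextAutosave -Scope Process | Out-Null

    # Log in with the managed identity against Azure Government
    # instead of global Azure.
    Connect-AzAccount -Identity -Environment AzureUSGovernment
}
```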

One thing to note: if you navigate to Integration and select your trigger, the storage account connection will be empty. This is normal.

It is not as painful as it looks. Well, maybe it was when I was trying to figure this all out, but hopefully this saves you some time! Cheers.